In the U.S., it seems like it just turned out that way as a result of a tug-of-war. Still, the conclusion is the same: it’s on hold.

One reason I’m holding off on AI regulation is simply that it’s like playing on extra-hard mode…

Just off the top of my head, here are some of the known hurdles:

Short intro

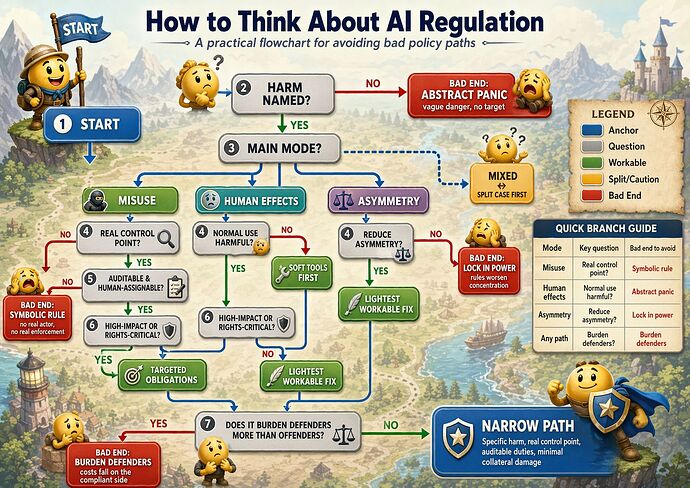

How to use this chart

This chart is not a machine for producing one grand answer to “AI regulation.” It is a filter for avoiding predictable policy mistakes. The point is to slow the discussion down just enough to ask the annoying questions first: what is the harm, who is the actor, where is the control point, what kind of failure mode is this, and will the proposed fix actually reduce harm rather than just look serious.

A lot of bad policy starts when different problems get mixed together. Misuse, ordinary-use human effects, and capability asymmetry are not the same problem, even if they overlap in practice. If those branches are not separated early, the discussion tends to drift toward abstract danger, symbolic rules, or elegant-sounding burdens that mostly fall on the careful side. That is what this chart is trying to prevent. (UNESCO)

The “narrow path” is supposed to feel narrower than people want. It is not the maximal-control path and it is not the maximal-freedom path either. It is the path where the harm is named, the actor is real, the obligations are checkable, and the collateral damage stays bounded. In practice, that usually means the answer is smaller, more specific, and less emotionally satisfying than the first draft people want to write. (UNESCO)

Supplementary table

| Branch | What the branch is really asking | Typical bad move | Better move | Grounding |
| --- | --- | --- | --- | --- |
| Misuse | Is there a real actor, channel, or control point to regulate? | Regulate the visible, compliant layer and miss the actual attacker. | Target deployers, distributors, platforms, access points, or other accountable actors. | NIST risk-management logic and UNESCO’s rule that responsibility must remain attributable to physical persons or existing legal entities. (UNESCO) |
| Human effects | Can the harm emerge in ordinary use, even without a malicious actor? | Assume “no attacker” means “no problem.” | Separate workflow shifts, overreliance, persuasion, and emotional dependence before choosing an intervention. | Conversational persuasion and affective-use work both support treating ordinary-use effects as a distinct branch. (Nature) |
| Asymmetry | Does the policy reduce capability asymmetry, or make it worse? | Slow down the careful side while concentrated players keep the advantage. | Ask whether the rule preserves defensive access and avoids locking in incumbents. | OECD highlights concentration risks in AI infrastructure and downstream markets. (OECD) |
| Rights-critical use | Is this merely useful software, or is it operating in a rights-sensitive setting? | Apply the same rule to everything. | Reserve stronger obligations for high-impact, high-rights, or high-safety uses. | EU AI Act deployer duties and fundamental-rights impact assessment logic. (EU Artificial Intelligence Act) |
| Auditability | Can anyone verify compliance in practice? | Write a rule that sounds serious but cannot be checked. | Prefer duties that can be documented, assigned, monitored, and reviewed. | NIST’s measure/manage logic and EU lifecycle risk-management requirements. (UNESCO) |
| Release stage | Is the intervention happening before public release, or after? | Assume you can “undo” a widely released capability later. | Be stricter where rollback is weak or impossible. | The International AI Safety Report treats public release mode, especially open-weight release, as a major factor in risk management. (International AI Safety Report) |
| Narrow path | Does the intervention reduce harm without creating larger collateral damage? | Treat “doing something” as success by default. | Prefer the lightest intervention that names the harm, hits a real control point, and stays auditable. | This is the common thread across NIST, UNESCO, and the EU’s targeted obligations. (UNESCO) |

Long-form notes

1. Start with the harm, not the vibe

Here is the first thing that keeps going wrong in AI policy talk: people say “this is dangerous” long before they say what gets worse, for whom, and by what path. Once that happens, the conversation starts floating. It turns into mood, branding, tribal signaling, or panic. The boring move is still the right one: name the harm first. If you cannot do that, you probably do not yet have a policy argument. You have a reaction. NIST’s framework is useful precisely because it keeps dragging the discussion back toward context, impact, and measurement instead of leaving it at slogan level. (UNESCO)

2. Not every AI problem is the same problem

A lot of arguments get muddy because three different things are being treated as one. One is malicious use: fraud, abuse, exploitation, strategic misuse. Another is ordinary-use human effects: overreliance, persuasion, dependency, and the quiet way a tool can start reshaping habits and judgment. The third is capability asymmetry: who gets the stronger tools, who gets to ignore the rules, and who ends up carrying the cost. If you do not split those apart early, you get elegant moral language attached to the wrong control point. That is how people end up fighting the wrong battle with the wrong tool. (UNESCO)

3. A rule without an actor is mostly theatre

This is where a lot of “AI regulation” talk becomes decorative. If you cannot identify a real human or legal actor who can actually be assigned duties, monitored, and corrected, then your rule is drifting toward theatre. UNESCO is unusually plain about this: responsibility must remain attributable to physical persons or existing legal entities, and AI systems should not be given legal personality themselves. That may sound obvious, but policy language forgets it all the time. Once the real actor disappears, the draft starts sounding grand and governing very little. (UNESCO)

4. “No attacker” does not mean “no harm”

One of the easiest mistakes is to assume that if no bad actor is present, the system is basically neutral. That is not how these systems work in practice. A tool can be useful and still quietly pull on attention, confidence, style, dependency, or judgment. A workflow shift is not automatically a crisis, but it is also not nothing. The more interactive and personalized the system becomes, the more it can shape the user even in ordinary use. Recent work found that GPT-4 could be more persuasive than humans in some debate settings, especially when personal information was available, and other work found that openly available LLM-generated messages could influence political attitudes. OpenAI and MIT researchers also studied affective use and emotional well-being in large-scale real-world chatbot use. None of that means every session is corrosive. It does mean the ordinary-use branch deserves to exist. (Nature)

5. If your policy helps the strong and burdens the careful, you missed

This is the branch people tend to underrate because it sounds less dramatic than safety or manipulation. But asymmetry is where a lot of bad policy quietly does its damage. A rule can be strict, serious, and well-intentioned and still make the overall situation worse if it mainly slows down the compliant side while concentrated firms, states, or determined abusers keep the meaningful advantage. OECD’s recent work on AI infrastructure and downstream markets is useful here because it puts concentration, access, restrictiveness, and market power on the table. In plain English: a policy can fail by making the already-strong harder to challenge. That is a real failure mode, even if the document looks responsible on paper. (OECD)

6. Strong rules belong where the stakes are actually strong

The instinct to say “regulate AI” in the abstract is understandable, but it is not very disciplined. The EU AI Act is helpful here because it does not treat all uses as the same. It places stronger obligations where health, safety, public services, employment, or fundamental rights are implicated, and it requires certain deployers of high-risk systems to conduct fundamental-rights impact assessments. That is a more serious move than just saying “we care about rights.” It asks: where are the stakes high enough that context-specific duties are justified? That is a much better question. (EU Artificial Intelligence Act)

7. If nobody can check it, do not call it governance

This is not cynical. It is just an old institutional lesson. Rules that cannot be verified have a way of becoming ceremony. NIST’s emphasis on measuring and managing risk, and the EU’s emphasis on lifecycle obligations, monitoring, and documented risk management, all point in the same direction: if a duty matters, someone must be able to inspect it, test it, document it, or enforce it. Otherwise the draft is likely to function as reassurance for insiders and decoration for outsiders. That may not be worthless, but it is not governance in the strong sense. (UNESCO)

8. Some interventions are easier to reverse than others

This matters more than people like to admit. It is one thing to constrain deployment in a narrow context. It is another thing to release a capability widely and then discover later that rollback is weak, partial, or practically impossible. The International AI Safety Report treats release mode, especially open-weight release, as an important factor in evaluating risk. Open-weight access can support research, transparency, and innovation, but it also changes how easily capabilities can be adapted, redistributed, and controlled after release. So timing matters. If the relevant control point is upstream, pretending you can fix it neatly downstream may just be wishful thinking dressed up as policy patience. (International AI Safety Report)

9. The narrow path is supposed to feel a little boring

If the chart is doing its job, the final good outcome should not feel heroic so much as disciplined. The narrow path is not maximal control, maximal openness, or the strongest moral posture. It is the path where the harm is specific, the actor is real, the duty is auditable, the burden is not dumped mainly on defenders, and the collateral damage stays bounded. In other words, the narrow path looks smaller because it is smaller. It avoids both the emotional satisfaction of sweeping solutions and the laziness of doing nothing. That is probably why it is narrower. (UNESCO)

10. Why the chart keeps asking annoying questions

The chart is intentionally repetitive. It keeps asking whether the harm is named, whether the actor is real, whether the use is ordinary or malicious, whether the setting is rights-critical, whether the rule is auditable, whether the intervention worsens asymmetry, and whether the burden falls on defenders. That repetition is not indecision. It is the point. In this area, a lot of bad effort comes from skipping those checks because the destination feels morally obvious in advance. The chart is there to slow that reflex down just enough to avoid the more predictable mistakes. (UNESCO)
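For readers who think better in code, the repeated checks can be sketched as a small checklist. This is only an illustration of the questions listed above, not a scoring tool: the `Proposal` class, its field names, and the `failed_checks` function are all hypothetical names made up for this sketch, and the yes/no answers still have to come from human judgment.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Proposal:
    """Hypothetical record of how a draft rule answers the chart's questions."""
    harm_named: bool            # is a specific harm stated?
    actor_identified: bool      # is there a real person or legal entity to hold duties?
    auditable: bool             # can anyone verify compliance in practice?
    worsens_asymmetry: bool     # does it entrench already-strong players?
    burden_on_defenders: bool   # does the cost fall mainly on the careful side?
    rights_critical: bool = False  # context flag, not a pass/fail check
    ordinary_use: bool = False     # context flag: harm arises without an attacker


def failed_checks(p: Proposal) -> List[str]:
    """Return the chart questions this proposal fails, in chart order."""
    failures = []
    if not p.harm_named:
        failures.append("harm is not named")
    if not p.actor_identified:
        failures.append("no real actor to assign duties to")
    if not p.auditable:
        failures.append("rule cannot be checked")
    if p.worsens_asymmetry:
        failures.append("intervention worsens asymmetry")
    if p.burden_on_defenders:
        failures.append("burden falls mainly on defenders")
    return failures


# Example: a draft that names the harm and the actor but cannot be audited.
draft = Proposal(
    harm_named=True,
    actor_identified=True,
    auditable=False,
    worsens_asymmetry=False,
    burden_on_defenders=False,
)
for problem in failed_checks(draft):
    print(problem)
```

The two context flags are deliberately left out of the pass/fail list. Whether a use is ordinary or malicious, or rights-critical or not, changes which intervention fits; it does not by itself make a draft good or bad.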

Optional closing line

The goal is not to find a perfect rule for “AI.” The goal is to avoid wasting effort on policies that cannot name the harm, cannot find the actor, cannot be checked, or quietly make the strong stronger.