Instructions for using mudler/Qwen3.6-35B-A3B-APEX-GGUF with libraries, notebooks, and local apps. Pick the section below that matches your setup.

- Libraries

- llama-cpp-python

How to use mudler/Qwen3.6-35B-A3B-APEX-GGUF with llama-cpp-python:

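    # !pip install llama-cpp-python huggingface-hub
    from llama_cpp import Llama

    llm = Llama.from_pretrained(
        repo_id="mudler/Qwen3.6-35B-A3B-APEX-GGUF",
        filename="Qwen3.6-35B-A3B-APEX-Balanced.gguf",
    )

    # create_chat_completion expects a list of role/content messages, not a bare string.
    response = llm.create_chat_completion(
        messages=[
            {"role": "user", "content": "Explain what a Mixture-of-Experts model is in two sentences."}
        ]
    )
    print(response["choices"][0]["message"]["content"])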
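The repository ships several quantization profiles, so the `filename` argument is the main thing to change when loading a different one. The following is a minimal sketch, assuming the file naming pattern used in the Available Files table; `gguf_filename` and `load_profile` are hypothetical helper names, and the `n_ctx` and `n_gpu_layers` values are illustrative, not recommended settings.

```python
REPO_ID = "mudler/Qwen3.6-35B-A3B-APEX-GGUF"

# Profiles listed in the Available Files table of this model card.
PROFILES = ("I-Balanced", "I-Quality", "Quality", "Balanced",
            "I-Compact", "Compact", "I-Mini")


def gguf_filename(profile: str) -> str:
    """Return the GGUF file name for an APEX profile (hypothetical helper)."""
    if profile not in PROFILES:
        raise ValueError(f"unknown profile {profile!r}; expected one of {PROFILES}")
    return f"Qwen3.6-35B-A3B-APEX-{profile}.gguf"


def load_profile(profile: str = "I-Balanced", n_ctx: int = 8192):
    """Download (if needed) and load one profile with llama-cpp-python."""
    # Imported lazily so gguf_filename works without the package installed.
    from llama_cpp import Llama

    return Llama.from_pretrained(
        repo_id=REPO_ID,
        filename=gguf_filename(profile),
        n_ctx=n_ctx,        # context window; illustrative value
        n_gpu_layers=-1,    # offload all layers to the GPU if one is available
    )
```

Calling `load_profile("I-Compact")` would fetch the 17 GB file instead of the 24 GB default; extra keyword arguments to `from_pretrained` are forwarded to the `Llama` constructor.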

- Notebooks

- Google Colab

- Kaggle

- Local Apps

- llama.cpp

How to use mudler/Qwen3.6-35B-A3B-APEX-GGUF with llama.cpp:

Install from brew

    brew install llama.cpp
    # Start a local OpenAI-compatible server with a web UI:
    llama-server -hf mudler/Qwen3.6-35B-A3B-APEX-GGUF
    # Run inference directly in the terminal:
    llama-cli -hf mudler/Qwen3.6-35B-A3B-APEX-GGUF

Install from WinGet (Windows)

    winget install llama.cpp
    # Start a local OpenAI-compatible server with a web UI:
    llama-server -hf mudler/Qwen3.6-35B-A3B-APEX-GGUF
    # Run inference directly in the terminal:
    llama-cli -hf mudler/Qwen3.6-35B-A3B-APEX-GGUF

Use pre-built binary

    # Download a pre-built binary from:
    # https://github.com/ggerganov/llama.cpp/releases
    # Start a local OpenAI-compatible server with a web UI:
    ./llama-server -hf mudler/Qwen3.6-35B-A3B-APEX-GGUF
    # Run inference directly in the terminal:
    ./llama-cli -hf mudler/Qwen3.6-35B-A3B-APEX-GGUF

Build from source code

    git clone https://github.com/ggerganov/llama.cpp.git
    cd llama.cpp
    cmake -B build
    cmake --build build -j --target llama-server llama-cli
    # Start a local OpenAI-compatible server with a web UI:
    ./build/bin/llama-server -hf mudler/Qwen3.6-35B-A3B-APEX-GGUF
    # Run inference directly in the terminal:
    ./build/bin/llama-cli -hf mudler/Qwen3.6-35B-A3B-APEX-GGUF

Use Docker

docker model run hf.co/mudler/Qwen3.6-35B-A3B-APEX-GGUF
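Once `llama-server` is running through any of the routes above, it can be queried over its OpenAI-compatible HTTP API. The sketch below uses only the Python standard library and assumes the server listens on `http://localhost:8080/v1` (the address used elsewhere on this page); `build_chat_request` and `chat` are hypothetical helper names.

```python
import json
import urllib.request

BASE_URL = "http://localhost:8080/v1"  # assumed llama-server address
MODEL_ID = "mudler/Qwen3.6-35B-A3B-APEX-GGUF"


def build_chat_request(prompt: str, base_url: str = BASE_URL) -> urllib.request.Request:
    """Build a POST request for the /chat/completions endpoint."""
    payload = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt: str, base_url: str = BASE_URL) -> str:
    """Send one user message and return the assistant's reply text."""
    with urllib.request.urlopen(build_chat_request(prompt, base_url)) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

With the server up, `print(chat("Hello"))` should print the model's reply; without a running server the call raises a `urllib.error.URLError`.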

- LM Studio

- Jan

- Ollama

How to use mudler/Qwen3.6-35B-A3B-APEX-GGUF with Ollama:

ollama run hf.co/mudler/Qwen3.6-35B-A3B-APEX-GGUF

- Unsloth Studio

How to use mudler/Qwen3.6-35B-A3B-APEX-GGUF with Unsloth Studio:

Install Unsloth Studio (macOS, Linux, WSL)

    curl -fsSL https://unsloth.ai/install.sh | sh
    # Run unsloth studio
    unsloth studio -H 0.0.0.0 -p 8888
    # Then open http://localhost:8888 in your browser
    # Search for mudler/Qwen3.6-35B-A3B-APEX-GGUF to start chatting

Install Unsloth Studio (Windows)

    irm https://unsloth.ai/install.ps1 | iex
    # Run unsloth studio
    unsloth studio -H 0.0.0.0 -p 8888
    # Then open http://localhost:8888 in your browser
    # Search for mudler/Qwen3.6-35B-A3B-APEX-GGUF to start chatting

Using HuggingFace Spaces for Unsloth

    # No setup required
    # Open https://huggingface.co/spaces/unsloth/studio in your browser
    # Search for mudler/Qwen3.6-35B-A3B-APEX-GGUF to start chatting

- Pi

How to use mudler/Qwen3.6-35B-A3B-APEX-GGUF with Pi:

Start the llama.cpp server

    # Install llama.cpp:
    brew install llama.cpp
    # Start a local OpenAI-compatible server:
    llama-server -hf mudler/Qwen3.6-35B-A3B-APEX-GGUF

Configure the model in Pi

    # Install Pi:
    npm install -g @mariozechner/pi-coding-agent

    # Add to ~/.pi/agent/models.json:
    {
      "providers": {
        "llama-cpp": {
          "baseUrl": "http://localhost:8080/v1",
          "api": "openai-completions",
          "apiKey": "none",
          "models": [
            { "id": "mudler/Qwen3.6-35B-A3B-APEX-GGUF" }
          ]
        }
      }
    }

Run Pi

    # Start Pi in your project directory:
    pi

- Hermes Agent

How to use mudler/Qwen3.6-35B-A3B-APEX-GGUF with Hermes Agent:

Start the llama.cpp server

    # Install llama.cpp:
    brew install llama.cpp
    # Start a local OpenAI-compatible server:
    llama-server -hf mudler/Qwen3.6-35B-A3B-APEX-GGUF

Configure Hermes

    # Install Hermes:
    curl -fsSL https://hermes-agent.nousresearch.com/install.sh | bash
    hermes setup
    # Point Hermes at the local server:
    hermes config set model.provider custom
    hermes config set model.base_url http://127.0.0.1:8080/v1
    hermes config set model.default mudler/Qwen3.6-35B-A3B-APEX-GGUF

Run Hermes

hermes

- Docker Model Runner

How to use mudler/Qwen3.6-35B-A3B-APEX-GGUF with Docker Model Runner:

docker model run hf.co/mudler/Qwen3.6-35B-A3B-APEX-GGUF

- Lemonade

How to use mudler/Qwen3.6-35B-A3B-APEX-GGUF with Lemonade:

Pull the model

    # Download Lemonade from https://lemonade-server.ai/
    lemonade pull mudler/Qwen3.6-35B-A3B-APEX-GGUF

Run and chat with the model

    lemonade run user.Qwen3.6-35B-A3B-APEX-GGUF-{{QUANT_TAG}}

List all available models

lemonade list

⚡ Each donation = another big MoE quantized

I host 25+ free APEX MoE quantizations as independent research. My only local hardware is an NVIDIA DGX Spark (122 GB unified memory) — enough for ~30-50B-class MoEs, but bigger ones (200B+) require rented compute on H100/H200/Blackwell, typically $20-100 per quant.

If APEX quants are useful to you, your support directly funds those bigger runs.

🎉 Patreon (Monthly) | ☕ Buy Me a Coffee | ⭐ GitHub Sponsors

💚 Big thanks to Hugging Face for generously donating additional storage — much appreciated.

Qwen 3.6 35B-A3B APEX GGUF

APEX (Adaptive Precision for EXpert Models) quantizations of Qwen/Qwen3.6-35B-A3B.

Brought to you by the LocalAI team | APEX Project | Technical Report

Benchmark Results

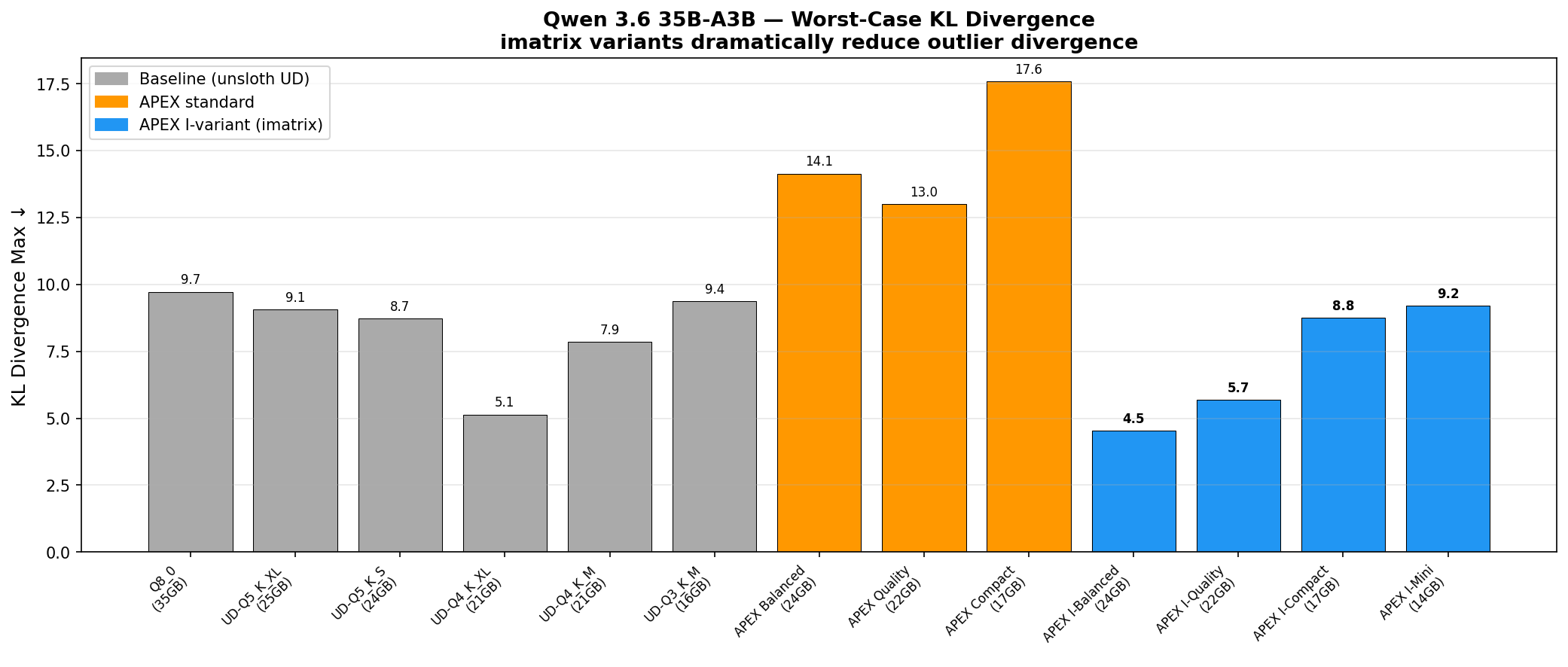

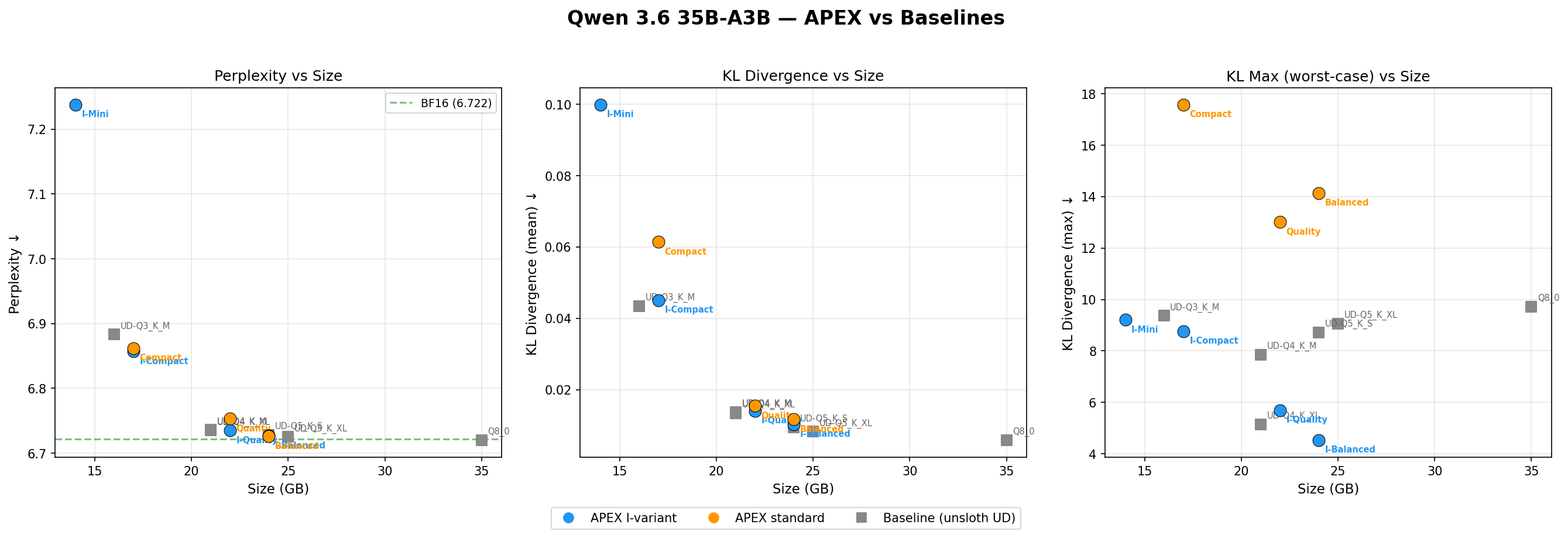

All benchmarks were run with llama.cpp b8797 on an NVIDIA GB10 (122 GB unified memory). Perplexity and KL divergence were measured on wikitext-2, with KL divergence computed against BF16 reference logits. HellaSwag was run zero-shot on 400 tasks.

APEX vs Baselines (unsloth UD quants)

| Model | Size | PPL ↓ | KL mean ↓ | KL median ↓ | KL max ↓ | HellaSwag ↑ |
|---|---|---|---|---|---|---|
| BF16 (reference) | 65 GB | 6.722 | n/a | n/a | n/a | n/a |
| Q8_0 | 35 GB | 6.720 | 0.0059 | 0.0022 | 9.72 | 82.5% |
| UD-Q5_K_XL | 25 GB | 6.725 | 0.0083 | 0.0030 | 9.06 | 82.8% |
| UD-Q5_K_S | 24 GB | 6.728 | 0.0095 | 0.0035 | 8.72 | 82.8% |
| APEX I-Balanced | 24 GB | 6.727 | 0.0103 | 0.0041 | 4.53 | 83.0% |
| APEX Balanced | 24 GB | 6.726 | 0.0117 | 0.0047 | 14.14 | 83.0% |
| APEX I-Quality | 22 GB | 6.735 | 0.0141 | 0.0054 | 5.69 | 82.5% |
| APEX Quality | 22 GB | 6.753 | 0.0155 | 0.0060 | 13.01 | 82.8% |
| UD-Q4_K_XL | 21 GB | 6.735 | 0.0134 | 0.0050 | 5.14 | 82.3% |
| UD-Q4_K_M | 21 GB | 6.736 | 0.0138 | 0.0054 | 7.86 | 83.3% |
| APEX I-Compact | 17 GB | 6.857 | 0.0451 | 0.0182 | 8.76 | 83.5% |
| APEX Compact | 17 GB | 6.862 | 0.0614 | 0.0261 | 17.58 | 83.3% |
| UD-Q3_K_M | 16 GB | 6.883 | 0.0435 | 0.0163 | 9.37 | 82.8% |
| APEX I-Mini | 14 GB | 7.238 | 0.0999 | 0.0414 | 9.21 | 82.8% |
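To relate the file sizes in the table to compression level, one can estimate effective bits per weight by scaling against the BF16 reference. This is a rough sketch under two assumptions: the BF16 file stores about 16 bits for every weight, and the rounded sizes in the table are accurate enough for a one-decimal estimate. The function name is hypothetical.

```python
BF16_SIZE_GB = 65  # BF16 reference size from the benchmark table

# A subset of rows from the benchmark table: name -> file size in GB.
SIZES_GB = {
    "Q8_0": 35,
    "UD-Q5_K_XL": 25,
    "APEX I-Balanced": 24,
    "APEX I-Quality": 22,
    "UD-Q4_K_XL": 21,
    "APEX I-Compact": 17,
    "UD-Q3_K_M": 16,
    "APEX I-Mini": 14,
}


def approx_bits_per_weight(size_gb: float, ref_size_gb: float = BF16_SIZE_GB) -> float:
    """Estimate bits per weight by scaling the 16-bit reference by file size."""
    return 16.0 * size_gb / ref_size_gb


for name, size in SIZES_GB.items():
    print(f"{name:16s} {size:3d} GB  ~{approx_bits_per_weight(size):.1f} bits/weight")
```

Under these assumptions the 24 GB profiles land near 5.9 bits per weight and I-Mini near 3.4, which is only an average: APEX deliberately spreads precision unevenly across tensors.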

Highlights

- APEX I-Balanced (24 GB) achieves the lowest KL max (4.53) of any quant tested — even lower than Q8_0 (9.72). The imatrix dramatically reduces worst-case divergence while matching UD-Q5_K_S on perplexity.

- At 17 GB, APEX I-Compact beats UD-Q3_K_M (16 GB) on PPL (6.857 vs 6.883) and HellaSwag (83.5% vs 82.8%).

- The imatrix cuts KL max by roughly half or more in every profile: I-Balanced 4.53 vs Balanced 14.14, I-Quality 5.69 vs Quality 13.01, I-Compact 8.76 vs Compact 17.58.

- APEX I-Mini (14 GB) delivers usable quality (PPL 7.24, HellaSwag 82.8%) in the smallest package.
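The effect of the imatrix on worst-case divergence can be checked directly from the benchmark table. The short sketch below recomputes the relative KL max reduction for each profile pair; the numbers are copied from the table and the function name is hypothetical.

```python
# (plain quant KL max, imatrix quant KL max), copied from the benchmark table
KL_MAX = {
    "Balanced": (14.14, 4.53),
    "Quality": (13.01, 5.69),
    "Compact": (17.58, 8.76),
}


def kl_max_reduction(plain: float, imatrix: float) -> float:
    """Fractional reduction in KL max when switching to the imatrix variant."""
    return 1.0 - imatrix / plain


for profile, (plain, imat) in KL_MAX.items():
    pct = kl_max_reduction(plain, imat)
    print(f"{profile:9s} {plain:6.2f} -> {imat:5.2f}  ({pct:.0%} lower)")
```

The reductions come out at about 68%, 56%, and 50% for Balanced, Quality, and Compact respectively.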

Available Files

| File | Profile | Size | Best For |
|---|---|---|---|
| Qwen3.6-35B-A3B-APEX-I-Balanced.gguf | I-Balanced | 24 GB | Best overall; lowest KL max of any quant tested |
| Qwen3.6-35B-A3B-APEX-I-Quality.gguf | I-Quality | 22 GB | Highest quality with imatrix, 2 GB smaller |
| Qwen3.6-35B-A3B-APEX-Quality.gguf | Quality | 22 GB | Highest quality standard |
| Qwen3.6-35B-A3B-APEX-Balanced.gguf | Balanced | 24 GB | General purpose |
| Qwen3.6-35B-A3B-APEX-I-Compact.gguf | I-Compact | 17 GB | Consumer GPUs, beats UD-Q3_K_M quality |
| Qwen3.6-35B-A3B-APEX-Compact.gguf | Compact | 17 GB | Consumer GPUs |
| Qwen3.6-35B-A3B-APEX-I-Mini.gguf | I-Mini | 14 GB | Smallest viable, fastest inference |
| mmproj.gguf | Vision projector | ~1 GB | Required for image understanding |
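Choosing a file mostly comes down to how much memory is available. As a minimal sketch, the helper below picks the APEX file with the lowest mean KL divergence that fits a given budget, using sizes and KL means from the tables on this page. The 4 GB default headroom for KV cache, the vision projector, and runtime buffers is an illustrative assumption, not a measured figure, and `pick_file` is a hypothetical name.

```python
from typing import Optional

# (profile, size in GB, KL mean), taken from the tables on this page
APEX_FILES = [
    ("I-Balanced", 24, 0.0103),
    ("Balanced", 24, 0.0117),
    ("I-Quality", 22, 0.0141),
    ("Quality", 22, 0.0155),
    ("I-Compact", 17, 0.0451),
    ("Compact", 17, 0.0614),
    ("I-Mini", 14, 0.0999),
]


def pick_file(memory_gb: float, headroom_gb: float = 4.0) -> Optional[str]:
    """Return the lowest-KL APEX file that fits, or None if nothing fits."""
    budget = memory_gb - headroom_gb  # headroom is an assumed safety margin
    fitting = [f for f in APEX_FILES if f[1] <= budget]
    if not fitting:
        return None
    profile, _size, _kl = min(fitting, key=lambda f: f[2])
    return f"Qwen3.6-35B-A3B-APEX-{profile}.gguf"


for mem in (16, 18, 24, 32):
    print(f"{mem} GB -> {pick_file(mem)}")
```

Ranking by KL mean is one possible criterion; ranking by KL max or perplexity would be equally easy to swap in via the `key` function.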

What is APEX?

APEX is a quantization strategy for Mixture-of-Experts (MoE) models. It classifies tensors by role (routed expert, shared expert, attention) and applies a layer-wise precision gradient — edge layers get higher precision, middle layers get more aggressive compression. I-variants use diverse imatrix calibration (chat, code, reasoning, tool-calling, agentic traces, Wikipedia).

The key insight: in MoE models, expert FFN tensors account for most of the model's weights, yet only about 8 of the 256 routed experts activate per token. APEX therefore compresses middle-layer experts more aggressively while preserving edge layers (the first and last 5) and keeping attention, SSM/Mamba, and shared expert tensors at higher precision.
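The edge-versus-middle split described above can be illustrated with a few lines of code. This is a deliberately simplified two-tier sketch for a 40-layer model with 5 protected layers at each end; the tier labels `"high"` and `"low"` are placeholders, and the actual per-tensor quantization types and any finer gradient are defined in the APEX project, not here.

```python
N_LAYERS = 40   # layer count of this model
EDGE = 5        # layers protected at each end


def expert_tier(layer: int, n_layers: int = N_LAYERS, edge: int = EDGE) -> str:
    """Return a placeholder precision tier for routed-expert tensors in a layer."""
    if not 0 <= layer < n_layers:
        raise ValueError(f"layer must be in [0, {n_layers}), got {layer}")
    is_edge = layer < edge or layer >= n_layers - edge
    return "high" if is_edge else "low"


tiers = [expert_tier(i) for i in range(N_LAYERS)]
print("edge layers at higher precision:", tiers.count("high"))
print("middle layers compressed harder:", tiers.count("low"))

# Share of routed experts that run for any single token.
print(f"active routed experts per token: {8 / 256:.1%}")
```

With these settings, 10 layers keep higher-precision experts and 30 are compressed more aggressively, while only about 3.1% of routed experts run for any given token.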

See the APEX project for full details, technical report, and scripts.

Architecture

- Model: Qwen 3.6 35B-A3B (Qwen/Qwen3.6-35B-A3B)

- Layers: 40

- Experts: 256 routed + shared (8 active per token)

- Total Parameters: ~35B

- Active Parameters: ~3B per token

- Attention: Hybrid (full attention every 4th layer, linear/Mamba otherwise)

- Vision: Built-in vision encoder (mmproj included)

- APEX Config: 5+5 symmetric edge gradient across 40 layers

- Calibration: v1.3 diverse dataset (chat, code, reasoning, multilingual, tool-calling, Wikipedia)

- llama.cpp: Built with b8797
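The hybrid attention layout in the list above can be sketched as a per-layer pattern. The code assumes zero-based layer indices in which every fourth layer (3, 7, 11, ...) uses full attention; the exact placement is an assumption made for illustration, and `attention_kind` is a hypothetical name.

```python
N_LAYERS = 40
FULL_ATTENTION_EVERY = 4


def attention_kind(layer: int) -> str:
    """Classify a layer as 'full' or 'linear' attention (assumed placement)."""
    # Assumption: zero-based layers 3, 7, 11, ... are the full-attention ones.
    return "full" if (layer + 1) % FULL_ATTENTION_EVERY == 0 else "linear"


layout = [attention_kind(i) for i in range(N_LAYERS)]
print(layout.count("full"), "full-attention layers,",
      layout.count("linear"), "linear layers")

# Rough share of parameters used per token, from the ~3B / ~35B figures above.
print(f"active parameter share: {3 / 35:.1%}")
```

Whatever the exact placement, a one-in-four pattern over 40 layers gives 10 full-attention layers and 30 linear ones.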

Run with LocalAI

local-ai run mudler/Qwen3.6-35B-A3B-APEX-GGUF@Qwen3.6-35B-A3B-APEX-I-Balanced.gguf

Credits

APEX is brought to you by the LocalAI team. Developed through human-driven, AI-assisted research. Built on llama.cpp.


Model tree for mudler/Qwen3.6-35B-A3B-APEX-GGUF

Base model

Qwen/Qwen3.6-35B-A3B